Over the last few weeks I’ve been looking at KWin more closely than in the past. It’s definitely a special beast within KDE, and I figured it could be useful to give some hints on how to develop and test it.

When developing something, the first step is always to compile the code and get it installed and usable. It’s especially delicate here because when we mess up, our system becomes quite unusable, so it needs to be done with care. To prevent major damage, we can install it into a separate prefix (see this blog post, changing kate for kwin).

The second step is to make sure that modifying the code actually modifies the behaviour you perceive. This is what we’ll focus on in this piece.

Bear in mind that most of what I’m saying here is possibly obvious and not news, but it’s still good to have it written down in case you feel like building on this (not fun to come up with) experience.

Testing KWin, Nested

That’s the first thing we’ll probably try. It’s simple: we run a kwin_wayland instance within our running session, and it shows up as a window containing whatever we run inside it. I find it useful to run a konsole inside to launch other applications. It’s also possible to point processes started elsewhere at that socket, but I haven’t really come across the need.

We’ll run something like:

kwin_wayland --exit-with-session konsole

Or even:

kwin_wayland --exit-with-session "konsole -e kate"

In any case, a good old kwin_wayland --help will list the available options. The --exit-with-session bit isn’t necessary, but it makes the nested instance easy to close.

One of the biggest advantages of running it like this is that it’s very easy to run under gdb (just prefix with gdb --args kwin_wayland...), so it’s something to keep in mind.
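To avoid retyping both variants, the nested run and its gdb-prefixed version can be wrapped in a small shell function. This is only a sketch: nested_kwin, KWIN_DEBUGGER and DRY_RUN are names I made up for illustration, nothing KWin provides.

```shell
# Sketch: run a nested kwin_wayland, optionally under a debugger.
# nested_kwin, KWIN_DEBUGGER and DRY_RUN are hypothetical names.
nested_kwin() {
    # "$@" is what we want started inside the nested session, e.g. konsole.
    if [ -n "${KWIN_DEBUGGER:-}" ]; then
        # Left unquoted on purpose so "gdb --args" splits into two words.
        set -- $KWIN_DEBUGGER kwin_wayland --exit-with-session "$@"
    else
        set -- kwin_wayland --exit-with-session "$@"
    fi
    if [ -n "${DRY_RUN:-}" ]; then
        # Only print the command line instead of running it.
        echo "$*"
    else
        "$@"
    fi
}
```

With that in place, nested_kwin konsole starts the plain nested session and KWIN_DEBUGGER="gdb --args" nested_kwin konsole starts the same thing under gdb.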

This works, but its usefulness is limited because important parts won’t be exercised: input, which will come from the actual session rather than from the kernel; and DRM, where output will go to the host session rather than to the kernel for the same reason.

TL;DR: if you’re modifying KWin to talk to the kernel, this won’t work.

Testing KWin, standalone

The next thing to try is to run it standalone. You’ll want to move to a tty (e.g. Ctrl+Alt+F2) and run the same command as earlier. Instead of getting a window, we now get it full screen, using DRM and libinput straight from the source. *yah!*

It’s still quite uncomfortable though: the output goes to the tty, which we can’t see because KWin is on top, and if it crashes we’re at a loss. Running it under gdb is also a pain because we can’t interact with it.

That’s why for this I’d recommend sshing into the system from another one. I’ve found the sweet spot to be a combination of ssh and tmux (screen should work as well; there are tons of docs online on how to use either). With ssh alone we find 2 main problems: if the connection breaks, the whole setup is left in a weird state, and from ssh we don’t really have access to the tty. Starting tmux on the tty and attaching to it over ssh addresses both.

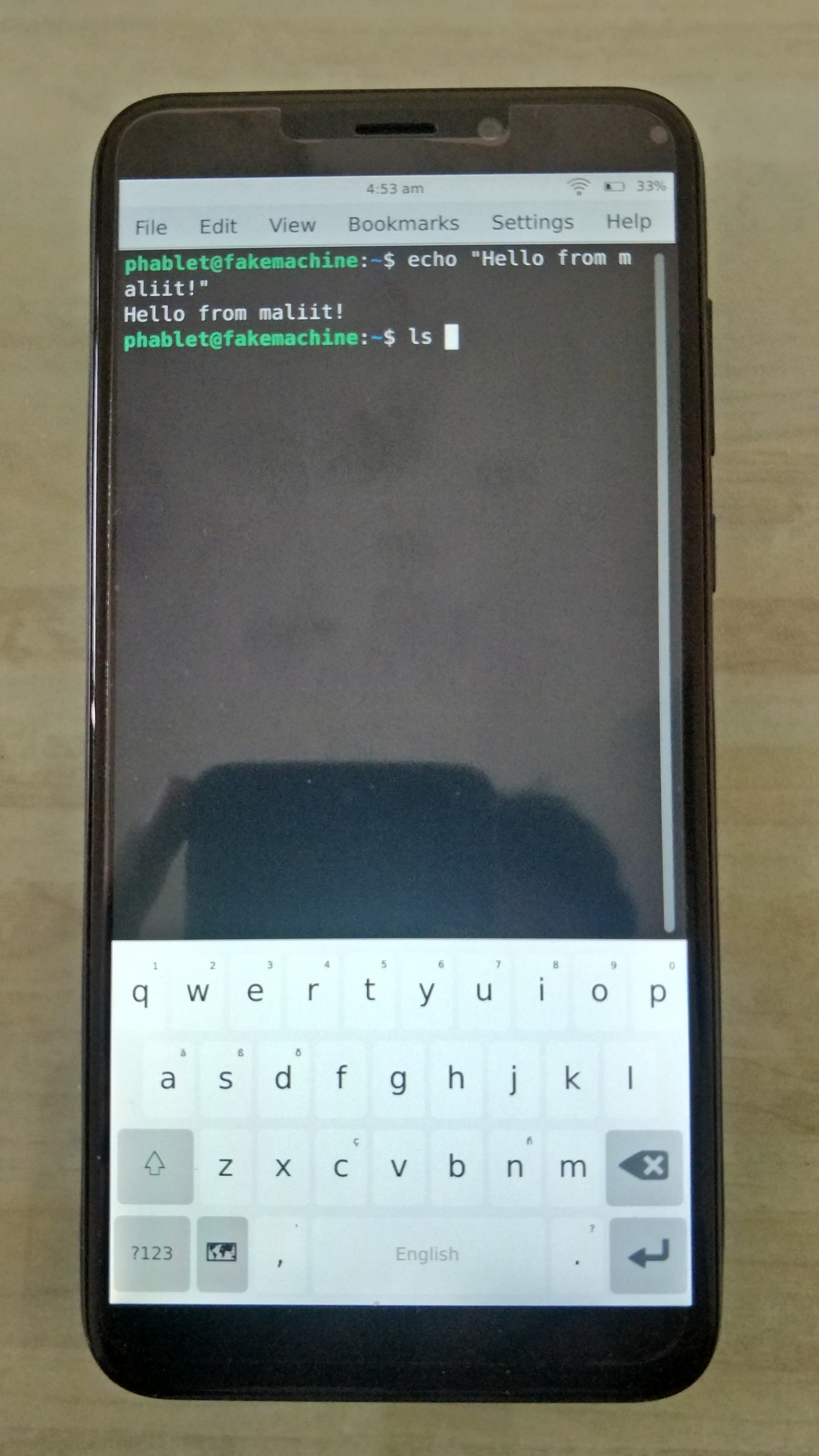

If you don’t have 2 computers available, you can ssh from a phone as well. It should be good enough, especially if you can get an external keyboard, although a nice screen is always useful.

So what we’ll do is:

- Make sure our system has sshd running, check the IP

- host$ tmux
- othercomputer$ ssh $IP
- othercomputer$ tmux a

And then we see the same on both, and we can run kwin_wayland from either and everything will work perfectly: we’ll see any debug output we may have added, we can kill it with Ctrl+C if we please, we can run gdb and add breakpoints. Anything. Full fun.
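If you do this often, it can help to give the tmux session a name so the other computer always attaches to the right one. A minimal sketch, assuming the session was started on the host tty with tmux new-session -s kwin (the name kwin is my own choice); remote_attach_cmd is a hypothetical helper that only prints the command to run on the other computer:

```shell
# Sketch: print the one-liner to run on the other computer.
# remote_attach_cmd and the default session name "kwin" are hypothetical.
remote_attach_cmd() {
    host=$1
    session=${2:-kwin}
    # ssh -t forces a terminal, which tmux needs in order to draw.
    # attach-session fails if the session does not exist, so we never
    # end up creating it from the ssh side by accident.
    printf 'ssh -t %s tmux attach-session -t %s\n' "$host" "$session"
}
```

Attaching rather than creating matters here: as far as I understand, the session has to be started from the tty so that whatever runs inside it gets access to the hardware, which is exactly what plain ssh lacks.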

Problem being it’s just KWin and there’s more to life than a black screen with a dancing window.

Testing a full Plasma Session

To start Plasma Wayland from a tty we run startplasma-wayland. This gives us a complete Plasma instance on Wayland, the same as if we had started it from a display manager like SDDM. Running it from the other system’s tmux still has advantages, like getting to see the debug output. Starting the session’s kwin_wayland under gdb is way beyond this blog post and barely useful considering the standalone approach I mentioned earlier. What we can do, though, is attach gdb to the running process.

From the other system (on tmux or not, although I find it useful to just put it in another tab) we can run sudo gdb --pid `pidof kwin_wayland` and it will attach. Then you can add your gdb business: breakpoints, make it crash, look at backtraces, print variables or just stare at it hoping things get fixed automatically.
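One thing to watch out for: if a nested kwin_wayland is still running somewhere, pidof prints several PIDs and the gdb command above gets confused. A small sketch that refuses to attach unless there is exactly one match; single_pid and attach_kwin are hypothetical helper names:

```shell
# Sketch: attach gdb only when exactly one kwin_wayland is running.
# single_pid and attach_kwin are hypothetical helpers.
single_pid() {
    # Succeeds and prints the PID only if exactly one argument was given.
    [ "$#" -eq 1 ] && echo "$1"
}

attach_kwin() {
    # pidof prints all matching PIDs separated by spaces;
    # the unquoted substitution splits them into separate arguments.
    pid=$(single_pid $(pidof kwin_wayland)) || {
        echo "expected exactly one kwin_wayland process" >&2
        return 1
    }
    sudo gdb --pid "$pid"
}
```

When there are several candidates, you can still pick the right PID by hand and pass it to gdb --pid yourself.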

Other

There are other things that have proved useful to me, like running it under valgrind or with a custom mesa build plus a modified LD_LIBRARY_PATH. This works as with any other project, except it doesn’t by default.

KWin has a safety system in place that prevents running it on emulators like valgrind and prevents LD_LIBRARY_PATH from being tampered with. This is great in general but prevents us from doing certain things.

To address this in our build, we can comment out the code in kwin/CMakeLists.txt where ${SETCAP_EXECUTABLE} is called on kwin_wayland. You’ll need to make sure kwin_wayland is recreated, so remember to host$ rm bin/kwin_wayland. This bit made me waste a fair number of hours, mind you, so it’s worth keeping in mind.
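To double-check that the binary we’re about to run really lost its special treatment, we can ask getcap (from libcap) about it. This is a sketch under the assumption that the protection comes from file capabilities applied by setcap, which is what the ${SETCAP_EXECUTABLE} call suggests; has_file_caps and the GETCAP override are hypothetical names:

```shell
# Sketch: succeed if the given file still carries file capabilities.
# has_file_caps and GETCAP are hypothetical; getcap itself ships with libcap.
has_file_caps() {
    # getcap prints nothing for a file without capabilities,
    # so any output means some capability is still set.
    [ -n "$("${GETCAP:-getcap}" "$1" 2>/dev/null)" ]
}
```

For instance, has_file_caps bin/kwin_wayland && echo "still has capabilities" tells us whether the binary needs to be removed and rebuilt before valgrind or LD_LIBRARY_PATH will behave.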

It’s also worth remembering there’s a ton of debugging tools that can be used. Here are some:

- WAYLAND_DEBUG=1 environment variable to see how the wayland protocols are flowing.
- gpuvis, useful to get an idea of how rendering is performing.
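The WAYLAND_DEBUG output gets noisy very quickly, so it usually pays off to filter it. A tiny sketch, assuming the log lines name objects as interface@id (which is how libwayland prints them, as far as I’ve seen); wl_filter is a hypothetical helper:

```shell
# Sketch: keep only the WAYLAND_DEBUG lines that mention one interface.
# wl_filter is a hypothetical helper name.
wl_filter() {
    # -F matches the text literally; the trailing "@" avoids matching
    # longer interface names that merely start with the same prefix.
    grep -F -- "$1@"
}
```

The log goes to stderr, so something like WAYLAND_DEBUG=1 kwin_wayland --exit-with-session konsole 2>&1 | wl_filter xdg_toplevel narrows it down to the traffic for a single interface.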

Also note that all of this applies to Plasma Mobile as well, where it’s useful for applications too, and where it’s most convenient since you get a proper keyboard and screen to look at debug output and gdb traces.

Hope this was useful to you.

Happy hacking, stay safe!